EmbodiedSplat 🛋️: Online Feed-Forward Semantic 3DGS

for Open-Vocabulary 3D Scene Understanding

EmbodiedSplat 🛋️: Online Feed-Forward Semantic 3DGS

for Open-Vocabulary 3D Scene Understanding

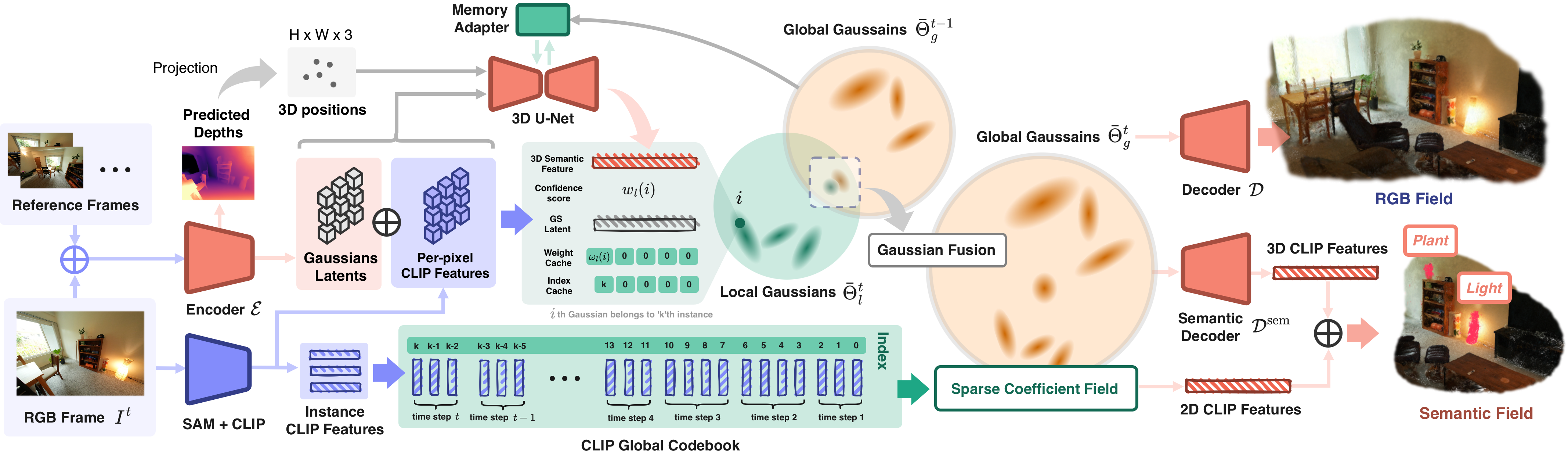

Overall framework of EmbodiedSplat. We endow feed-forward 3DGS with semantic understanding capabilities by binding the two types of CLIP features: 1) 2D Semantic Features are attached to each Gaussian via Sparse Coefficient Field with CLIP Global Codebook, effectively reducing memory consumption while preserving semantic generalizability of CLIP. 2) 3D Geometric-aware Features are produced by aggregating the feature point cloud of 3DGS through 3D U-Net and temporal-aware memory adapter. These two types of features enable mutual compensation between semantic and 3D geometry, which results in superior understanding capabilities compared to the existing baselines.

@article{lee2026embodiedsplat,

title={EmbodiedSplat: Online Feed-Forward Semantic 3DGS for Open-Vocabulary 3D Scene Understanding},

author={Lee, Seungjun and Wang, Zihan and Wang, Yunsong and Lee, Gim Hee},

journal={arXiv preprint arXiv:2603.04254},

year={2026}

}